PDF to Excel Conversions: Practical Guide for Accurate Data

Learn how to convert PDFs to Excel with precision. This educational how-to covers OCR setup, table recognition, data validation, and automation for reliable, repeatable results.

This guide shows how to convert PDFs to Excel accurately. It covers when to use OCR-based versus text-based conversion, how to preserve table structure, and how to verify results before saving. By following the steps, you’ll minimize formatting loss, correct misaligned cells, and produce data that is ready for analysis.

Understanding the PDF-to-Excel conversion landscape

Converting a PDF into a usable Excel workbook starts with recognizing what type of PDF you have. Native text PDFs store actual characters, which makes extraction straightforward, while scanned or image-based PDFs require optical character recognition (OCR) to turn images into editable text. The quality of the source document matters: clear column boundaries, consistent row alignment, and standard fonts lead to higher accuracy. According to PDF File Guide, PDF-to-Excel conversion becomes more reliable when you start with clean source files and choose the right extraction tool for the job. In professional settings, analysts often encounter multi-column layouts, merged cells, and embedded images that complicate extraction. Being aware of these complications helps you set realistic expectations and select methods that retain the essential data structure rather than just the raw text.

To maximize success, establish a baseline by testing a representative sample PDF before moving on to larger batches. This practice minimizes rework and shows you the typical errors you’ll need to fix in Excel. The goal is not just to copy text but to reproduce a usable table that preserves headers, units, and repeated rows. The PDF File Guide team emphasizes documenting the intended output format early, which prevents drift between the source file and the final spreadsheet.
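One way to document the intended output format is to write it down as a small schema and compare extracted headers against it. The sketch below is only an illustration: the `Column` class, the `TARGET_SCHEMA` list, and the invoice column names are hypothetical and not taken from any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Column:
    name: str       # header text expected in the spreadsheet
    kind: str       # "text", "number", or "date"
    unit: str = ""  # optional unit, e.g. "USD"


# Hypothetical target schema for an invoice table.
TARGET_SCHEMA = [
    Column("Invoice ID", "text"),
    Column("Date", "date"),
    Column("Amount", "number", unit="USD"),
]


def schema_problems(extracted_headers, schema=TARGET_SCHEMA):
    """Return human-readable mismatches between extracted headers and the schema."""
    expected = [column.name for column in schema]
    found = [header.strip() for header in extracted_headers]
    problems = []
    for name in expected:
        if name not in found:
            problems.append(f"missing column: {name}")
    for name in found:
        if name not in expected:
            problems.append(f"unexpected column: {name}")
    # Only report ordering when the set of columns is otherwise correct.
    if not problems and found != expected:
        problems.append("columns present but in a different order")
    return problems


print(schema_problems([" Invoice ID", "Amount "]))
```

Running this check on the first row of every converted sheet gives an early signal when a tool drops or reorders a column.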

Methods for converting PDFs to Excel

There are several valid pathways to move data from PDF to Excel, each with trade-offs in accuracy, speed, and cost. Text-based extractions work well when PDFs contain selectable text with clearly delineated columns. OCR-based extractions excel when dealing with scanned documents, but their accuracy depends on scan quality and language complexity. Hybrid approaches combine both methods: extracting obvious text first, then applying OCR to troublesome sections. Tools range from desktop software suites to web-based services and integrated features in spreadsheet programs. Adobe Acrobat Pro, ABBYY FineReader, and Microsoft Excel’s Get Data from PDF are popular options, while free alternatives can handle simple tables. The key is to test multiple approaches on your worst-case PDFs and compare results side-by-side to decide which path yields the most reliable data for your workflow.

In practice, a robust workflow often uses a two-pronged approach: (1) extract clean, text-based tables from PDFs with good structure, and (2) run OCR only on pages or sections where text is not selectable. This minimizes errors and reduces manual cleanup time. PDF File Guide notes that consistent formatting in the source PDFs dramatically reduces post-conversion corrections, so when possible, obtain the cleanest possible source for the data.
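The two-pronged approach can be sketched as a simple routing step. The function below assumes you already have one extracted text string per page from whichever extractor you use. Both the `plan_extraction` name and the `min_chars` threshold of 20 are illustrative assumptions rather than a recommended setting.

```python
def plan_extraction(page_texts, min_chars=20):
    """Split 1-based page numbers into text-extraction and OCR groups.

    page_texts holds one string (or None) per page. Pages with little or
    no selectable text are treated as scanned and routed to OCR.
    """
    plan = {"text": [], "ocr": []}
    for number, text in enumerate(page_texts, start=1):
        visible = "".join((text or "").split())  # drop all whitespace
        key = "text" if len(visible) >= min_chars else "ocr"
        plan[key].append(number)
    return plan


pages = [
    "Item  Qty  Price\nWidget 4 19.99\nGadget 2 5.00",  # selectable text
    "",                                                  # scanned page
    None,                                                # extractor returned nothing
]
print(plan_extraction(pages))
```

A character-count threshold is a crude heuristic. A page containing a scanned table plus a short typed caption could still be misrouted, so spot-check the plan on your own files.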

Choosing the right tool for your needs

PDF conversion tools range from lightweight, single-purpose apps to comprehensive suites with advanced table detection and batch processing. For occasional tasks, built-in features in Excel or free online converters can be sufficient. For ongoing workflows, investing in OCR-enabled software or enterprise-grade tools pays off through higher accuracy, automation, and better handling of complex tables. When evaluating options, focus on three criteria: accuracy (how well tables and headers are preserved), layout fidelity (retained column alignment and cell borders), and automation capabilities (batch processing, scripting, and API access). Remember to verify support for multi-page PDFs, rotated headings, and merged cells, as these often trip up automated extraction. The PDF File Guide recommends running a small pilot set across several tools to compare performance, then standardizing on the most reliable option for your team.
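To make a pilot comparison less subjective, you can score each tool's output against a hand-verified copy of the same table. The following is a minimal sketch of the accuracy criterion only. The `cell_accuracy` function and the tool names are hypothetical, and the score ignores extra cells that a tool may add.

```python
def cell_accuracy(reference, candidate):
    """Fraction of reference cells that the candidate reproduces exactly.

    Both arguments are lists of rows (lists of strings). Rows or cells
    missing from the candidate count as errors. Surrounding whitespace
    is ignored when comparing.
    """
    total = 0
    correct = 0
    for r, ref_row in enumerate(reference):
        cand_row = candidate[r] if r < len(candidate) else []
        for c, ref_cell in enumerate(ref_row):
            total += 1
            cand_cell = cand_row[c] if c < len(cand_row) else None
            if cand_cell is not None and cand_cell.strip() == ref_cell.strip():
                correct += 1
    return correct / total if total else 1.0


# Hand-verified table and the output of two hypothetical tools.
reference = [["Item", "Qty"], ["Widget", "4"]]
outputs = {
    "tool_a": [["Item", "Qty"], ["Widget", "4"]],
    "tool_b": [["Item", "Qty"], ["Widget", "A"]],
}
scores = {name: cell_accuracy(reference, rows) for name, rows in outputs.items()}
print(scores)
```

Layout fidelity and automation support still need a manual look. This score covers only whether the cell contents survived.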

Additionally, consider the ecosystem: whether you already rely on Excel, Google Sheets, or other data platforms may influence which tool fits best with your existing workflow. If you collaborate with others, choosing widely supported formats (.xlsx, .csv) makes handoffs smoother and reduces compatibility issues. Documentation and customer support are important indicators of long-term reliability; look for clear guides on handling common table structures and troubleshooting tips.

Step-by-step workflow: manual extraction with OCR

When you’re dealing with a scanned PDF, OCR becomes essential. A thoughtful workflow minimizes errors and makes post-processing simpler. Start by preparing a small test file that includes the typical table types you encounter. Next, pick an OCR-enabled tool that supports table detection and export to Excel-ready formats. Run the OCR on the document, then export to a supported Excel format such as .xlsx. Open the resulting file and verify that headers align with rows and that numeric data remains recognizable. You’ll likely encounter misreads in dates, numbers with decimals, and currency symbols. Mark these areas for targeted cleanup. The workflow is iterative: adjust recognition settings, re-run OCR on problematic pages, and re-export until the results meet your accuracy threshold. Throughout the process, keep a clean record of the source PDFs and the exact tool settings used so you can reproduce results later. PDF File Guide’s experience shows that a repeatable workflow is essential for teams that handle many PDFs with similar formatting.
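Keeping a record of the source PDF and the tool settings can be as simple as writing a small JSON entry per run. This sketch uses only the Python standard library. The file name, tool name, version, and setting keys are placeholders, not options of any real OCR product.

```python
import hashlib
import json
from datetime import datetime, timezone


def run_record(pdf_bytes, source_name, tool, tool_version, settings):
    """Build a JSON-serializable record tying an output to its exact inputs."""
    return {
        "source": source_name,
        # The hash identifies the exact file even if it is later renamed.
        "sha256": hashlib.sha256(pdf_bytes).hexdigest(),
        "tool": tool,
        "tool_version": tool_version,
        "settings": settings,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


record = run_record(
    b"%PDF-1.7 example bytes",   # in practice: Path("file.pdf").read_bytes()
    "invoices_2024_q1.pdf",      # hypothetical file name
    tool="example-ocr",          # placeholder tool name
    tool_version="1.0",          # placeholder version
    settings={"language": "eng", "dpi": 300, "table_detection": True},
)
print(json.dumps(record, indent=2))
```

Saving one such record next to each exported workbook makes it possible to re-run a conversion later with the same inputs and settings.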

Handling data formatting, merged cells, and errors

Even high-quality OCR can leave behind formatting quirks. Merged cells, multi-line headers, and nested tables are common culprits that break simple copy-paste operations. A practical approach is to import the data into Excel with a focus on raw data first, then systematically restore structure using a combination of text-to-columns, delimiter-based parsing, and manual reformatting. When you encounter merged headers, split them into separate rows or columns that reflect the underlying data. For numeric data, check for OCR misreads such as 0 vs O, 1 vs l, or decimal separators that differ by locale. Creating a small validation set helps you detect these issues quickly. The goal is to convert the messy intermediate result into a clean, analysis-ready table with clearly labeled columns and consistent data types. PDF File Guide highlights the importance of establishing a normalization rule set before bulk edits to prevent drift across files.
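A normalization rule for numeric cells might look like the sketch below. It swaps letters that resemble digits, strips currency symbols, and handles locale-specific separators. The `parse_number` helper is hypothetical, and mapping a capital I to 1 is an added assumption beyond the 0/O and 1/l cases mentioned above. Apply it only to columns you know are numeric, since the same substitutions would corrupt ordinary text.

```python
from decimal import Decimal, InvalidOperation

# Letters that OCR commonly confuses with digits (numeric columns only).
_LOOKALIKES = str.maketrans({"O": "0", "o": "0", "l": "1", "I": "1"})


def parse_number(raw, decimal_sep=".", thousands_sep=","):
    """Convert an OCR'd numeric cell to Decimal, or None if it cannot be parsed."""
    text = raw.strip().translate(_LOOKALIKES)
    # Keep digits, signs, and separators; drop currency symbols and spaces.
    text = "".join(ch for ch in text if ch in "0123456789+-.,")
    # Remove grouping separators first, then standardize the decimal mark.
    text = text.replace(thousands_sep, "")
    text = text.replace(decimal_sep, ".")
    try:
        return Decimal(text)
    except InvalidOperation:
        return None


print(parse_number("$1,2O4.5l"))
print(parse_number("1.234,56 €", decimal_sep=",", thousands_sep="."))
print(parse_number("n/a"))
```

Returning `None` instead of guessing keeps unparseable cells visible, so they can be reviewed by hand rather than silently turned into wrong numbers.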

Validation and cleaning results in Excel

Validation is the bridge between automated extraction and trustworthy data. Start with a header mapping exercise: compare each column title against the PDF's table headers and confirm that they align. Next, run basic numeric checks, such as totals, averages, and counts, to detect anomalous values introduced by OCR. Use conditional formatting to highlight cells that fall outside expected ranges or that contain non-numeric characters in numeric columns. Cleaning steps may include trimming whitespace, standardizing date formats, and normalizing text cases for IDs. Maintain an audit trail: log the original values, the edits performed, and the rationale for each change. A disciplined validation routine minimizes downstream errors in dashboards and reports. PDF File Guide emphasizes documenting each cleaning rule to ensure consistency across future extractions.
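The numeric checks and the audit trail described above can be prototyped outside Excel before you build them into a workbook. This is a minimal sketch with hypothetical helper names (`check_numeric_column`, `apply_fix`). It assumes the expected total is read from the source PDF by hand.

```python
from decimal import Decimal, InvalidOperation


def check_numeric_column(values, expected_total=None):
    """Flag non-numeric cells and compare the column total to a known value."""
    bad_rows = []
    total = Decimal("0")
    for row, cell in enumerate(values, start=1):
        try:
            number = Decimal(cell.strip())
        except InvalidOperation:
            bad_rows.append(row)
            continue
        if not number.is_finite():  # reject "NaN" and "Infinity"
            bad_rows.append(row)
            continue
        total += number
    matches = None if expected_total is None else total == expected_total
    return {"bad_rows": bad_rows, "total": total, "total_matches": matches}


def apply_fix(values, row, new_value, reason, audit_log):
    """Replace one cell and log the original value, the edit, and the rationale."""
    audit_log.append(
        {"row": row, "original": values[row - 1], "new": new_value, "reason": reason}
    )
    values[row - 1] = new_value


amounts = ["19.99", "5.00", "l2.50", " 7.25 "]  # row 3 contains an OCR misread
audit_log = []
before = check_numeric_column(amounts, expected_total=Decimal("44.74"))
apply_fix(amounts, 3, "12.50", "OCR read digit 1 as letter l", audit_log)
after = check_numeric_column(amounts, expected_total=Decimal("44.74"))
print(before["bad_rows"], after["total_matches"], audit_log)
```

Comparing against a total printed in the PDF catches errors that still look like valid numbers, such as a dropped digit.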

Automation and batch processing

Once you’ve stabilized a reliable workflow for a single PDF, extend it to batches. Batch processing saves substantial time when you have recurring formats. Look for tools that support queued jobs, scripting, or API access so you can script the conversion of multiple PDFs without manual clicks. Establish a naming convention for input and output files, and, if possible, create a template Excel file with the desired column structure that the tool can populate. Spin up a test batch before applying to the entire folder to catch issues early. Automation is not a substitute for verification, but it dramatically reduces repetitive work and the risk of human error in large-scale conversions. PDF File Guide notes that a well-constructed batch pipeline can be a game changer for data teams.
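A batch run with a naming convention and a small test batch could be organized as sketched below. The `convert` argument stands in for whatever conversion call your chosen tool exposes. It is a placeholder, not a real API, and the folder layout is an assumption.

```python
from pathlib import Path


def plan_batch(input_dir, output_dir, suffix=".xlsx"):
    """Map each PDF in input_dir to an output path, skipping finished files."""
    input_dir, output_dir = Path(input_dir), Path(output_dir)
    jobs = []
    for pdf in sorted(input_dir.glob("*.pdf")):
        # Naming convention: same base name, new extension.
        target = output_dir / (pdf.stem + suffix)
        if not target.exists():
            jobs.append((pdf, target))
    return jobs


def run_batch(jobs, convert, test_size=None):
    """Call convert(pdf, target) for each job and collect failures.

    test_size limits the run to the first few jobs, which is useful for a
    trial batch. None processes every job.
    """
    failures = []
    for pdf, target in jobs[:test_size]:
        try:
            convert(pdf, target)
        except Exception as exc:  # keep going; report problems at the end
            failures.append((pdf.name, str(exc)))
    return failures
```

A typical use is `run_batch(plan_batch("incoming", "converted"), convert, test_size=5)`, followed by a full run once the five outputs have been checked. Because finished files are skipped, re-running after a failure only retries the PDFs that have no output yet.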

Authority sources and best practices

To ensure you’re following industry-standard methods, consult trusted sources. The National Institute of Standards and Technology (NIST) offers foundational guidelines for data integrity and OCR quality metrics. University extension services provide practical tips for handling data from government PDFs and forms. Microsoft Learn’s documentation on Get Data from PDF explains how Excel can connect to PDFs and what to expect in terms of structure retention. By cross-referencing these resources, you’ll build a robust, defensible workflow. For more detailed guidance, download the official PDFs and read the accompanying best-practice notes from these authorities. PDF File Guide also provides practical checklists and templates to help you standardize your process across projects.

Authority Sources

- NIST: https://www.nist.gov

- Extension Service (University): https://extension.illinois.edu

- Microsoft Learn: https://learn.microsoft.com

- Adobe (PDF technology overview): https://www.adobe.com

Tools & Materials

- Computer with internet access (Recommended with at least 8 GB RAM for smoother processing)

- PDF viewer/editor (For opening, selecting, and sometimes annotating PDFs)

- Spreadsheet software (Excel or Google Sheets) (To import, clean, and analyze converted data)

- OCR-enabled conversion tool (e.g., Adobe Acrobat Pro, ABBYY FineReader, or a reputable online service)

- Test PDFs with clear tables (Use samples that reflect your typical data layouts)

- Documentation or access to official guides (Helpful for troubleshooting and reproducibility)

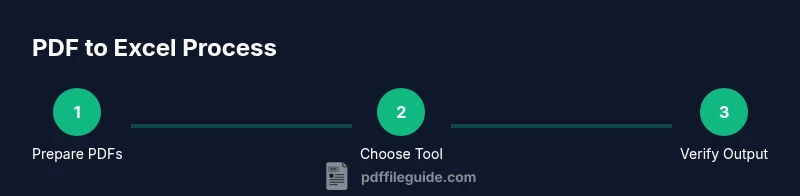

Steps

Estimated time: 60-90 minutes

1. Prepare PDFs and test data

Collect a representative sample of PDFs that mirror your typical table layouts. Ensure scanners or sources produce legible text or clear scans. Keep a clean copy of originals for reference and testing.

Tip: Use a sample set that includes mixed layouts (single-line headers, multi-line headers, and merged cells).

2. Choose the extraction method

Decide between text-based extraction and OCR. Start with text-based when possible; switch to OCR for scanned documents. Hybrid workflows often yield the best balance of accuracy and speed.

Tip: Test both methods on a few pages to compare header retention and numeric accuracy.

3. Configure table detection

Set up the tool to detect tables, define delimiters, and specify output as Excel (.xlsx). Adjust recognition language and decimal separators to match your locale.

Tip: Enable grid or border detection when available to improve alignment.

4. Run conversion and export

Execute the conversion and export to Excel. Review the initial output for obvious misreads and alignment issues. Save a temporary file for iterative fixes.

Tip: Keep original PDFs untouched; work on a copy to preserve data integrity.

5. Validate data and fix issues

Perform quick checks: headers match, numeric data remains numeric, and currencies/dates align with locale expectations. Correct any misreads manually where necessary.

Tip: Use Excel features like Data Validation to catch errors in later steps.

6. Document and automate

Document the steps you used and consider creating a batch process for future files. Save templates and scripts to enable repeatability across similar PDFs.

Tip: Include a short changelog showing the tool version and settings used.

Questions & Answers

Can I convert scanned PDFs to Excel accurately?

Yes, but you’ll rely on OCR. Accuracy depends on scan quality, fonts, and the tool. Expect some manual cleanup, especially for numbers and dates.

Is there a risk of losing formatting during conversion?

Some formatting may be lost during conversion, especially merged cells and complex multi-page tables. Plan to reformat in Excel after extraction.

What’s better: free tools or paid software?

Free tools work for simple tables, but paid OCR software generally delivers higher accuracy and better batch processing.

How do I verify the converted data?

Cross-check headers, row counts, and numeric values against the source. Use simple formulas to validate sums or counts where appropriate.

Can this process be automated for many PDFs?

Yes. Use batch processing, scripts, or API integrations to convert multiple PDFs and apply consistent post-processing steps.

Key Takeaways

- Define the target Excel schema before extraction

- Choose the right tool depending on PDF type (text vs. scanned)

- Validate results with checks for headers and numbers

- Document the workflow for repeatability

- Leverage batch processing for repetitive tasks